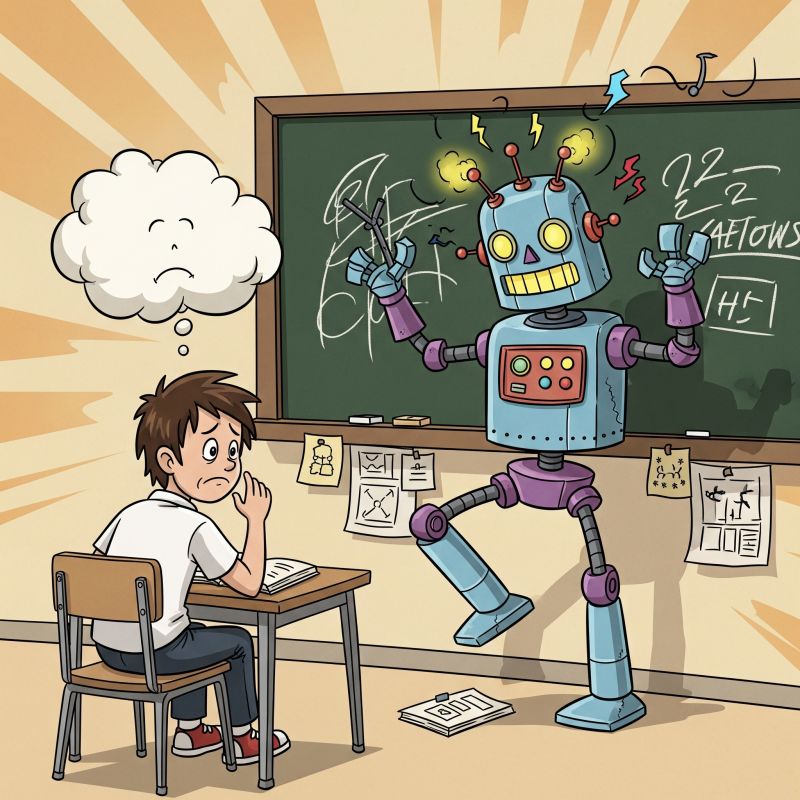

Lesson learned: for factual analysis, consumer-grade AIs like ChatGPT and Gemini can be dangerously unreliable.

The project: create an AI study partner for the California psychology licensing exam by feeding in over 1,000 pages of dense legal text, plus strict instructions to use ONLY the sources provided. And it had to be an off-the-shelf AI with a low price tag.

Simple, right? Well, witness this actual exchange with GPT-4.5:

🤖 GPT: “Penal Code § 11165.2(c) states that ‘serious emotional damage’ to a child constitutes child abuse.”

😅 Me (after checking): “I don’t see a section (c).”

🤖 GPT: “You’re right—there is no subdivision (c) in Penal Code § 11165.2.”

😅 Me: 🤬🤬🤬🤬🤬

This wasn’t a one-off. The results, in ChatGPT and Gemini Pro, were an utter disaster:

❌ Constant hallucinations

❌ Wholesale invention of laws

❌ Misinterpretation of existing codes

❌ Confident citation of fake sources

It was a powerful reminder that when we use technology to enhance learning, two considerations are paramount:

WHICH tool we use

and

HOW WELL we apply our critical thinking.

In the end, the solution wasn’t a better chatbot, but a different type of AI tool designed for factual retrieval and analysis. More on that soon.

Meanwhile: what are your AI reality-check stories?