Sorry, but the answer might be yes.

Wait, don’t leave! This is a four-part series on how we can use artificial intelligence to our advantage!

Part 1 of this series, on Human Intelligence vs. Artificial Intelligence, is here.

This is part 2, on Artificial Intelligence, Machine Learning and Generative AI.

Part 3, Is Artificial Intelligence Smarter Than I Am? is here.

Part 4, How Can I Master Artificial Intelligence? is here!

(This series is based on a presentation I gave in partnership with Ribbon Education and NACADA in September 2023. If you’re interested in future presentations like this, follow me, Ribbon, and NACADA on LinkedIn!)

Part 2: Artificial Intelligence, Machine Learning and Generative AI

There’s a whole subset of artificial intelligence that focuses on learning, pattern-building, and prediction based on HUGE amounts of data. It’s called machine learning (abbreviated as ML), and it’s behind the artificial intelligence revolution we’ve all heard about recently.

If you want to train a machine to recognize a dog, you’d actually help it learn very much like the way you learned as a baby.

You’d feed a lot of images to the AI so it can learn from experiencing those images. In “supervised” ML you’d act like that helpful caregiver you had as a baby, labeling each image to tell the machine what is and what isn’t a dog. In “unsupervised” ML you’d hand over the images without labels, and the machine would group similar ones together by itself. (Supervised ML is the most widely used technique today.) Either way, the machine would build a pattern for recognizing dogs.1
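If you’re curious what that looks like in code, here’s a minimal sketch in Python. Everything in it is made up for illustration: each “image” is reduced to two invented numbers, and the function names (`train_supervised`, `predict`, `cluster_unsupervised`) are mine, not from any real library. Real systems learn from millions of pixels, not two numbers, so treat this as a toy under those assumptions.

```python
# Toy "images": each one is just two invented numbers (an assumption for
# illustration), e.g. (ear floppiness, snout length), each between 0 and 1.
labeled = [
    ((0.9, 0.8), "dog"),
    ((0.8, 0.9), "dog"),
    ((0.2, 0.1), "not dog"),
    ((0.1, 0.3), "not dog"),
]


def centroid(points):
    """Average position of a group of points."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))


def distance(a, b):
    """Straight-line distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def train_supervised(examples):
    """Supervised: the labels tell the machine which examples belong together."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {label: centroid(points) for label, points in by_label.items()}


def predict(model, features):
    """Pick the label whose learned pattern (centroid) is closest."""
    return min(model, key=lambda label: distance(model[label], features))


def cluster_unsupervised(points, rounds=10):
    """Unsupervised: no labels, so split the points into two groups by similarity."""
    centers = [points[0], points[-1]]  # naive starting guesses
    groups = [[], []]
    for _ in range(rounds):
        groups = [[], []]
        for p in points:
            nearest = 0 if distance(p, centers[0]) <= distance(p, centers[1]) else 1
            groups[nearest].append(p)
        centers = [centroid(g) if g else c for g, c in zip(groups, centers)]
    return groups


model = train_supervised(labeled)
print(predict(model, (0.85, 0.75)))

unlabeled = [features for features, _ in labeled]
print(cluster_unsupervised(unlabeled))
```

Notice that the unsupervised version finds two groups but has no idea which one is “dog.” A human still has to look at the groups and name them.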

As it develops its pattern, the machine becomes capable of predicting the success of its guesses. “This thing has a 67% chance of being a dog, based on all the pictures I’ve seen and the pattern I’ve learned, therefore it’s most likely a dog; this other thing has an 18% chance, therefore it probably isn’t.” Our brains don’t tend to mathematically calculate probability but they do work in a similar way when recognizing things: “that looks a lot like a dog, so it’s probably a dog.”
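Here’s a rough sketch of that “chance of being a dog” idea in Python. I’m assuming the machine first produces a raw score and then squashes it into a probability between 0 and 1 with a logistic function, which is one common approach but not the only one. The names `dog_probability` and `decide`, and the 50% cutoff, are my own choices for illustration.

```python
import math


def dog_probability(score):
    """Squash a raw score (any number) into a probability between 0 and 1.

    Assumption: a logistic function is used; real models may do this differently.
    """
    return 1 / (1 + math.exp(-score))


def decide(probability, threshold=0.5):
    """Turn a probability into a plain-language guess using a cutoff."""
    if probability >= threshold:
        return "probably a dog"
    return "probably not a dog"


print(decide(0.67))  # the 67% example from the text
print(decide(0.18))  # the 18% example from the text
print(decide(dog_probability(2.0)))  # a strongly positive score
```

The cutoff is a design choice. If wrongly calling something a dog were costly, you might raise it well above 50%.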

So that recent AI revolution I mentioned? It’s called generative AI (commonly abbreviated Gen AI) and it’s the technology behind chat-generating AIs like ChatGPT and Bard, image-generating AIs like DALL-E and Midjourney, and other systems that specialize in creating video animations, computer code, and other useful things.

Generative AI leverages machine learning to generate content. Basically it ingests huge volumes of data to learn patterns, and then predicts the best answer it can generate for a user’s request.

You interact with Gen AI through prompts. Prompting means writing text inputs that you give to the AI in order to get a useful response. Prompts become more useful as they become more detailed. Remember, Gen AI is a probability game. The computer will predict its answer with a higher probability of success if its prompt is crystal clear. So you want to think of your end goal. What exactly do you want the computer to produce? How long should it be? From whose perspective should it be created? Should it mimic a certain style? Your prompt is the description of the perfect end point, and the computer fills in the blanks.
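One way to make those questions a habit is to treat a prompt as a fill-in-the-blanks template. The little Python helper below is just a sketch: `build_prompt` and its parameters are hypothetical names I made up, and it only assembles text. It doesn’t send anything to an AI system.

```python
def build_prompt(task, length=None, perspective=None, style=None):
    """Assemble a detailed prompt from the questions worth answering up front."""
    parts = [task.strip().rstrip(".") + "."]
    if length:
        parts.append(f"Keep it to about {length}.")
    if perspective:
        parts.append(f"Write it from the perspective of {perspective}.")
    if style:
        parts.append(f"Mimic the style of {style}.")
    return " ".join(parts)


vague = build_prompt("Write a weather report")
detailed = build_prompt(
    "Write a weather report for my city",
    length="150 words",
    perspective="a riverboat pilot",
    style="Mark Twain",
)
print(vague)
print(detailed)
```

The vague version leaves the machine guessing about length, voice, and style. The detailed version describes the end point, so there are fewer blanks to fill in.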

So by building patterns of understanding based on massive volumes of images or writing, and then analyzing those patterns to determine what’s most likely to match your prompt, Gen AI systems create content based on probability:2 “What is most likely to match the prompt I’ve been given? Cool, I’ll create that!”
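To make “create content based on probability” a bit more concrete, here’s a tiny Python sketch that learns which word tends to follow which, and then generates text by always picking the most likely next word. This is a drastic simplification and not how ChatGPT or DALL-E actually work internally. The training sentence and the function names `learn_patterns` and `generate` are invented for this example.

```python
from collections import Counter, defaultdict


def learn_patterns(text):
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        follows[current][following] += 1
    return follows


def generate(follows, start, length=4):
    """Starting from one word, keep adding the most likely next word."""
    output = [start]
    for _ in range(length - 1):
        options = follows.get(output[-1])
        if not options:
            break  # the machine has never seen anything follow this word
        output.append(options.most_common(1)[0][0])
    return " ".join(output)


training_text = "the dog barks and the dog barks and the cat sleeps"
patterns = learn_patterns(training_text)
print(generate(patterns, "the"))  # prints "the dog barks and"
```

The machine saw “dog” follow “the” twice and “cat” only once, so it bets on “dog.” Scale that idea up to enormous amounts of text and far richer patterns, and you get the flavor of how a Gen AI system chooses its output.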

Here’s an example: If you prompt a Gen AI system like ChatGPT to write a weather report for your city in the style of Mark Twain, the machine will look back at all the examples of weather reports it’s seen, plus all the examples of Twain’s writing it’s seen, and (if it’s able to browse the internet) even look at tomorrow’s weather, and concoct an output that it predicts will best match your request. Like this.

(And in case you haven’t figured it out yet: DALL-E created all the images I’ve used in this series.)

So is AI-generated content original?

Well, it depends on how you look at it.

Generative AI creates new art based on probability calculations about what the user might want, but it bases that output on its prior learning. It might create images that look original, but the images are highly derivative.

Remember the baby and dog images I used in part 1 of this series? I prompted DALL-E to create them in “Tom and Jerry cartoon style.”

Here’s a comparison of DALL-E’s dog (on the left) to an actual dog (“Tyke”) from the original Tom and Jerry cartoon. DALL-E’s dog probably doesn’t exist anywhere else in exactly this form, but it’s also pretty derivative – note the smile, big eyes, puffy cheeks, etc.

But human-generated content is derivative, too!

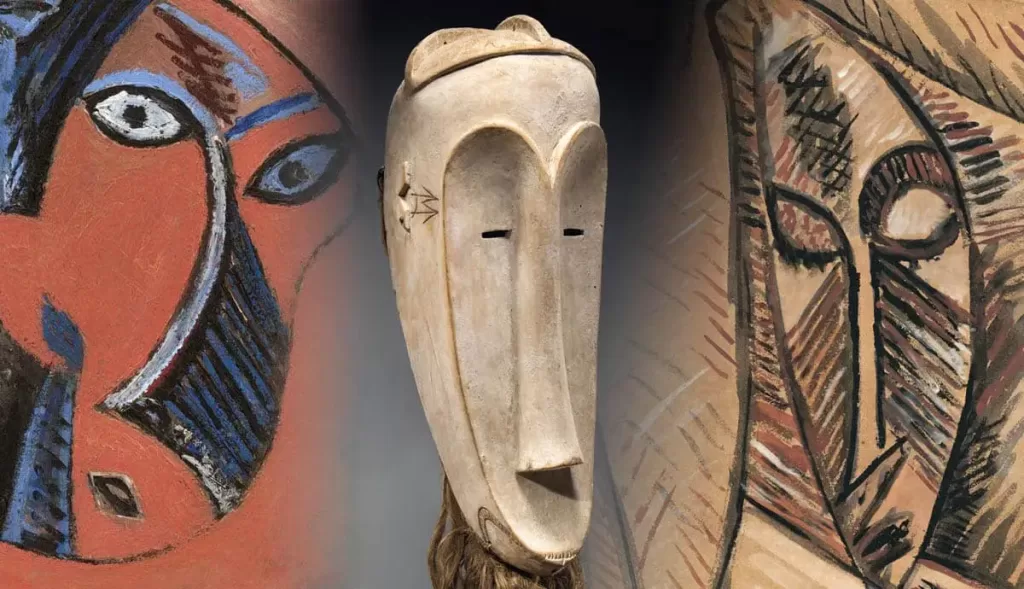

Let’s use the example of Picasso. He’s generally thought of as a revolutionary artist and one of the creators of the artistic style Cubism.

At the same time, Picasso’s art was inspired partly by African artwork with its angular designs, by contemporary artists such as Cézanne, Rousseau, and Toulouse-Lautrec, and probably by plenty of other influences.3,4

So Picasso’s art feels inventive and different, but it was also influenced heavily by Picasso’s experience.

As such, it’s simultaneously original and derivative.

Picasso was even accused of plagiarism by a contemporary!5

So in a glass-half-full scenario, Gen AI creates original works in the way great artists do: by drawing from inspiration to create artwork that has never existed before.

And in a glass-half-empty scenario, Gen AI is a copyright infringement machine, and we’re starting to see some case law play out around this!6

Tune in for part 3 next week and learn just how intelligent AI is.

Want to know how I can help you or your team learn? Go here!

Need more great AI thoughts right away? Our friends at BrandSwan are thinking about “How AI Tools Are Weeding Out Mediocrity”

Footnotes

1. https://mitsloan.mit.edu/ideas-made-to-matter/machine-learning-explained

2. https://www.rackspace.com/blog/distinctions-ai-ml-generative-ai#:~:text=AI%20encompasses%20a%20broad%20range,generate%20original%20and%20realistic%20content.

3. https://www.theartstory.org/artist/picasso-pablo/

4. https://www.thecollector.com/picasso-and-african-art/

5. https://www.moma.org/artists/4609#:~:text=At%20other%20times%20he%20provoked,the%20same%20time%2C%20completely%20new.

6. https://hbr.org/2023/04/generative-ai-has-an-intellectual-property-problem